Vertica dbschema

2/11/2023

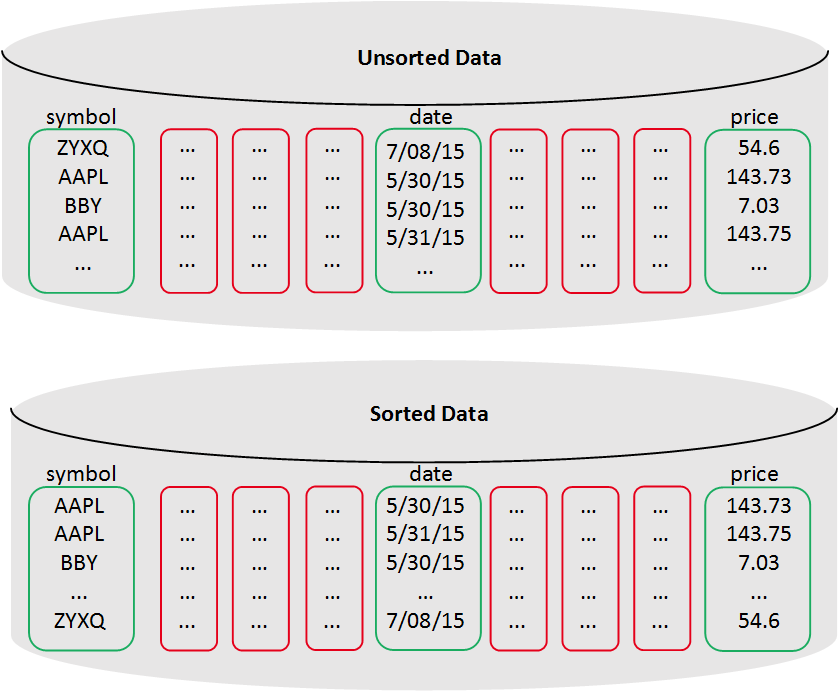

Vertica is a tool that is really helpful for working with big data. It is a columnar storage platform designed to handle large volumes of data, and it delivers very fast query performance even in scenarios that are traditionally query-intensive.

Now, what do we mean by "columnar storage"? It means that Vertica stores data column by column instead of row by row. When answering a query, Vertica reads only the columns the query needs, which reduces disk I/O and makes it ideal for read-intensive workloads.

The following are some of the features provided by Vertica:

- Standard SQL interface with many built-in analytics capabilities.
- Support for standard programming interfaces.
- High-performance, parallel data transfer.
- Ability to store machine learning models and use them for in-database scoring.

To download Vertica and try it out, go to the official Vertica site. Installing it is also really easy, and the steps can be found in the documentation.

This post focuses on reading data from Vertica using Spark and dumping it into Kafka.

Setup

First, add the following dependencies for Spark SQL and Spark-SQL-Kafka to your build.sbt:

```scala
// sparkVersion is assumed to be defined elsewhere in build.sbt
libraryDependencies ++= Seq(
  "org.apache.spark" %% "spark-sql"            % sparkVersion,
  "org.apache.spark" %% "spark-sql-kafka-0-10" % sparkVersion
)
```

The Scala version can be set to 2.12.x in this project.

To support Vertica, we need two additional jars. These jars aren't available on Maven, so we have to add them to our SBT project manually. Luckily, Vertica includes them in the package we just installed, under the following path: `/opt/vertica/packages/SparkConnector/lib`

There are different connectors for different Spark versions, so we have to choose the one that matches ours. As we are using the latest version of Spark, we'll choose the latest version of the connector.

After deciding which connector to use, copy its jars to the project-root/lib/ folder and add the following line to your build.sbt to put the unmanaged jars on the classpath:

```scala
// Adds every jar in project-root/lib/ to the compile classpath
unmanagedJars in Compile ++= ((baseDirectory.value / "lib") ** "*.jar").classpath
```

All the required dependencies are now in place, and we are ready to start coding.

To read data from Vertica, we first have to provide some properties and credentials to access it. The following properties are needed:

```scala
val properties: Map[String, String] = Map(
  "table"         -> "source.table",        // Vertica table name
  "dbschema"      -> "source.dbschema",     // Vertica schema in which the table resides
  "host"          -> "host",                // host on which Vertica is running
  "numPartitions" -> "source.numPartitions" // number of partitions to create in the resulting DataFrame
)
```

Next, create a Spark session to connect with Spark and Spark SQL:

```scala
import org.apache.spark.sql.SparkSession

val sparkSession = SparkSession.builder()
  .appName(appName) // name of the Spark application
  .master(master)   // Spark master URL
  .getOrCreate()
```

Here, appName is the name you want to give your Spark application, and master is the master URL for Spark. We are running Spark in local mode, so we set master as follows:

```scala
val master = "local"
```

One last thing we need is the data source that Spark should use for Vertica:

```scala
val verticaDataSource = ".DefaultSource"
```
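With the properties, the Spark session and the data source defined above, the read from Vertica and the write to Kafka could look roughly like the sketch below. It is not the post's original code and rests on assumptions: that the Vertica connector accepts the `properties` map as reader options, and that each row is serialized to JSON (a choice made here for illustration) and placed in the `value` column that Spark's Kafka sink writes as the message value. The object `VerticaToKafka` and its method names are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, struct, to_json}

object VerticaToKafka {

  // Options for Spark's Kafka sink; broker address and topic come from the caller.
  def kafkaWriteOptions(bootstrapServers: String, topic: String): Map[String, String] =
    Map(
      "kafka.bootstrap.servers" -> bootstrapServers,
      "topic"                   -> topic
    )

  // Loads the Vertica table as a DataFrame. Assumption: the connector takes the
  // connection settings (table, dbschema, host, numPartitions, ...) as reader options.
  def readFromVertica(spark: SparkSession,
                      dataSource: String,
                      properties: Map[String, String]): DataFrame =
    spark.read
      .format(dataSource)
      .options(properties)
      .load()

  // Packs each row into a JSON string in a `value` column (the column the
  // Kafka sink uses as the message value) and writes the batch to Kafka.
  def writeToKafka(df: DataFrame, kafkaOptions: Map[String, String]): Unit =
    df.select(to_json(struct(df.columns.map(col): _*)).alias("value"))
      .write
      .format("kafka")
      .options(kafkaOptions)
      .save()
}
```

With the values from the post, the pipeline would be `VerticaToKafka.writeToKafka(VerticaToKafka.readFromVertica(sparkSession, verticaDataSource, properties), VerticaToKafka.kafkaWriteOptions("localhost:9092", "vertica-data"))`, where the broker `localhost:9092` and the topic `vertica-data` are placeholder assumptions. JSON is only one option: the Kafka sink accepts any string or binary `value` column.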
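To make the columnar storage description above a little more concrete, here is a toy Scala sketch contrasting a row layout with a column layout. It only illustrates the principle and says nothing about Vertica's actual on-disk format; the `Sale` record, its fields and the `RowStore`/`ColumnStore`/`Columnar` names are hypothetical.

```scala
// Hypothetical record type, used only for this illustration.
final case class Sale(id: Int, region: String, amount: Double)

// Row-oriented layout: each element holds every field of one record.
final case class RowStore(rows: Vector[Sale]) {
  // Conceptually walks every full record, even though only `amount` is needed.
  def totalAmount: Double = rows.map(_.amount).sum
}

// Column-oriented layout: one vector per field.
final case class ColumnStore(ids: Vector[Int], regions: Vector[String], amounts: Vector[Double]) {
  // Reads only the `amounts` column; `ids` and `regions` are never touched.
  def totalAmount: Double = amounts.sum
}

object Columnar {
  // Pivots row-oriented records into one vector per column.
  def fromRows(rows: Vector[Sale]): ColumnStore =
    ColumnStore(rows.map(_.id), rows.map(_.region), rows.map(_.amount))
}
```

Both `RowStore(sales).totalAmount` and `Columnar.fromRows(sales).totalAmount` produce the same sum; the difference is how much data each layout has to touch to get there, which is why a column store saves I/O on queries that reference only a few columns.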